Last Updated: March 05, 2026, 22:08 IST

Behind the high-tech veneer of the Meta AI assistant lies a massive 'ghost workforce' in Nairobi, Kenya

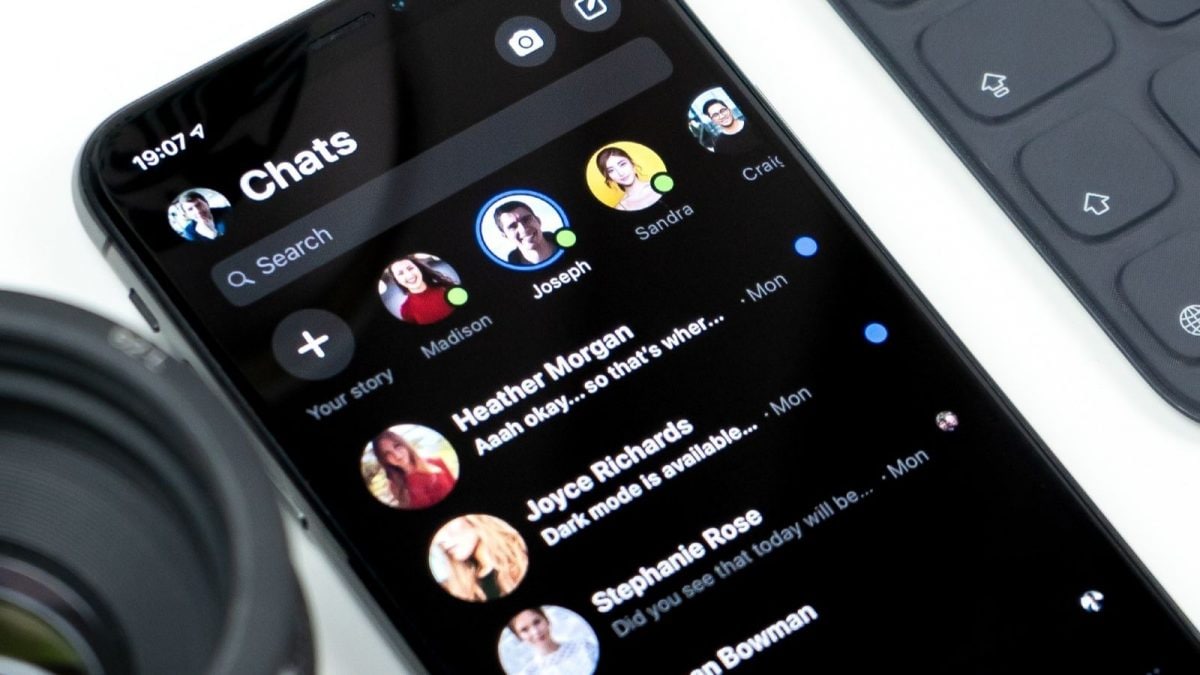

The revelation that European and Indian user data is being exported to Kenya—a country that currently lacks an 'adequacy decision' for data protection under the EU’s GDPR—has triggered a firestorm. Representational image

When Mark Zuckerberg paced the stage at Meta Connect to showcase the second-generation Ray-Ban Meta smart glasses, he pitched a future where an “all-seeing” AI assistant lives on your face, ready to translate signs, identify monuments, or capture memories. However, a searing investigation by Swedish newspapers Svenska Dagbladet and Göteborgs-Posten has exposed a far more voyeuristic reality.

Behind the high-tech veneer of the Meta AI assistant lies a massive “ghost workforce” in Nairobi, Kenya. These contractors, employed by the firm Sama, are not just cleaning up digital glitches; they are staring directly into the intimate lives of users who often have no idea they are being watched.

The Human Engine of the AI Illusion

The term “artificial intelligence” suggests a self-learning machine, but the current state of computer vision still requires a “human-in-the-loop.” For Meta’s glasses to recognise a specific brand of coffee or a complex street scene, thousands of human annotators must manually label footage. This process, known as data annotation, involves drawing boxes around objects and transcribing audio to “train” the models.

In Nairobi, more than 30 workers spoke to Swedish journalists about the harrowing nature of this work. Far from sanitised data points, the footage they review includes intimate sexual encounters, people undressing, and users visiting the bathroom. Because the glasses are designed to be “always ready”, they frequently capture material accidentally, such as when a user puts the device down on a bedside table or forgets it is still activated.

The Failure of the Privacy Shield

Meta has long maintained that it filters data to protect user privacy before it reaches human eyes. The company claims that faces are automatically blurred and sensitive information is scrubbed. However, the Swedish investigation found that these safeguards are catastrophically inconsistent.

Workers reported that anonymisation algorithms frequently fail, particularly in low-light conditions or when subjects are in motion. This means that the “blurred” faces of family members, children, and strangers are often perfectly recognisable to the contractors. Perhaps more alarming is the exposure of financial data; reviewers have documented seeing bank cards, credit card numbers, and PIN entries captured as users looked at their wallets or used ATMs while wearing the devices.

The Transparency Gap in Eyewear Stores

A significant portion of the scandal centres on how these devices are sold. Reporters visited ten major eyewear retailers in Sweden, where sales staff frequently provided false or misleading information. Customers were told that data stays “locally on the app” or that recording only happens with explicit intent.

In reality, the investigation found that the glasses’ AI functions cannot operate without communicating with Meta’s servers. Every time a user asks the AI to “look and tell me what this is”, a video packet is sent to the cloud. Once that data leaves the device, users effectively lose control. Under the fine print of Meta’s terms of service, this media is fair game for manual human review to “improve the system.”

Geopolitical Risk and the Regulatory Fallout

The revelation that European and Indian user data is being exported to Kenya, a country that currently lacks an “adequacy decision” for data protection under the EU’s GDPR, has triggered a firestorm. The UK’s Information Commissioner’s Office (ICO) and European lawmakers have already launched inquiries into whether Meta has breached informed consent laws.

For the workers in Nairobi, the “ghost work” is a psychological burden. Tasked with viewing graphic or highly private content for substandard wages, they are the invisible filters of the AI revolution. As Meta aims to triple its production of smart glasses by 2027, this investigation serves as a grim reminder: your “private” AI assistant is not a machine but a room full of strangers watching the world through your eyes.

First Published: March 05, 2026, 22:08 IST

News tech Porn, PINs And Private Lives: What Meta’s Smart Glasses 'Ghost Workers' Are Really Seeing
