A draft of the guidelines says AI groups seeking business with the government must grant the US an irrevocable licence to use their systems for all legal purposes.

Earlier, the Pentagon formally designated Anthropic a "supply-chain risk" and barred government contractors from using the AI firm's technology in work for the US military. (File photo)

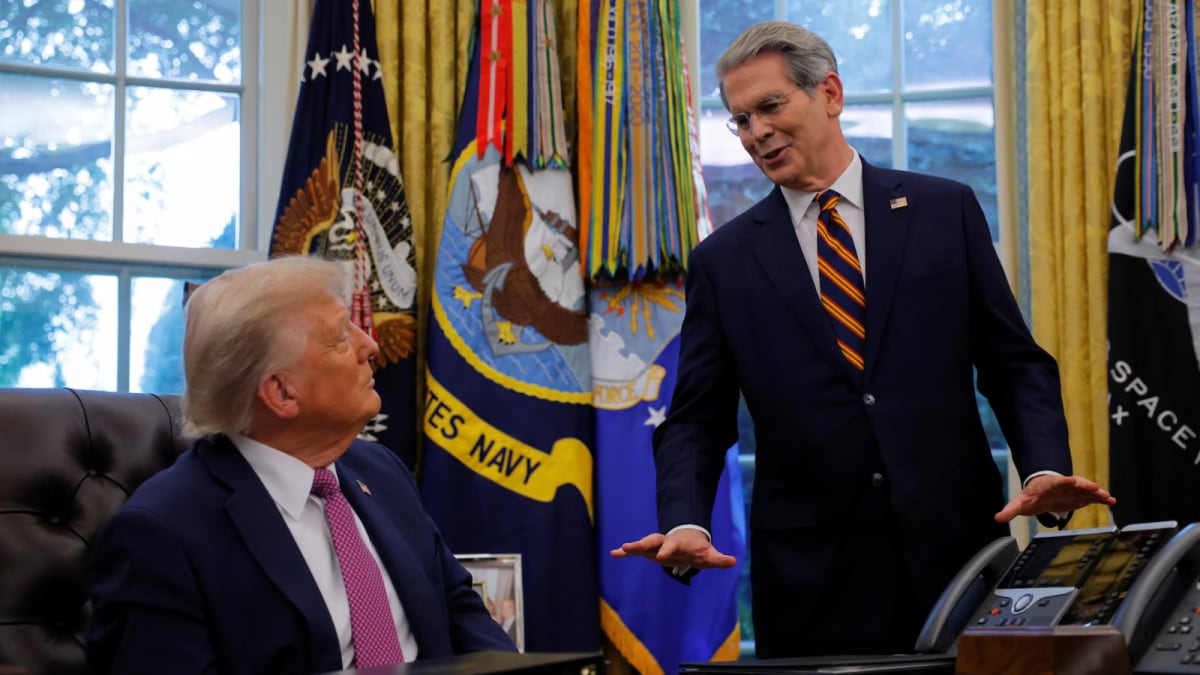

The Trump administration has drawn up strict rules for civilian artificial intelligence contracts that would require AI companies to allow "any lawful" use of their models amid a stand-off between the Pentagon and Anthropic, the Financial Times reported on Friday.

The report comes a day after the Pentagon formally designated Anthropic a "supply-chain risk" and barred government contractors from using the AI firm's technology in work for the US military. That move followed a months-long dispute over the company's insistence on safeguards that the Defense Department says went too far.

A draft of the guidelines reviewed by the FT says AI groups seeking business with the government must grant the US an irrevocable licence to use their systems for all legal purposes.

The guidance from the US General Services Administration (GSA) would apply to civilian contracts and is part of a broader government-wide effort to strengthen AI services procurement, the FT reported, adding that it mirrors measures the Pentagon is considering for military contracts.

The White House and the GSA did not immediately respond to requests for comment.

The draft from the GSA also mandates that contractors "must not intentionally encode partisan or ideological judgments into the AI systems data outputs," the FT reported.

It also requires companies to disclose whether their models have been "modified or configured to comply with any non-US federal government or commercial compliance or regulatory framework," the FT reported.

- Ends

Published On: Mar 7, 2026 09:19 IST
